Zhihan Yang
ML PhD Student, Cornell CS

I'm a 2nd-year PhD student at Cornell CS, where I'm fortunate to work with Prof. John Thickstun on machine learning and generative models. I'm interning at NVIDIA GenAIR. I interned at MBZUAI Institute of Foundation Models working with Hector Liu and Subham Sahoo (Summer 2024). I have a BA in Mathematics and a BA in Statistics from Carleton College. During those years, I was fortunate to work with Seth Cooper on generative models, Anna Rafferty on bandits, Christopher Amato on deep RL, and Adam Loy and Claire Kelling on MCMC algorithms and Gaussian Processes. I received the CRA Outstanding Undergraduate Researcher Award.

Publications

I specialize in developing principled, controllable and efficient generative models for various modalities.


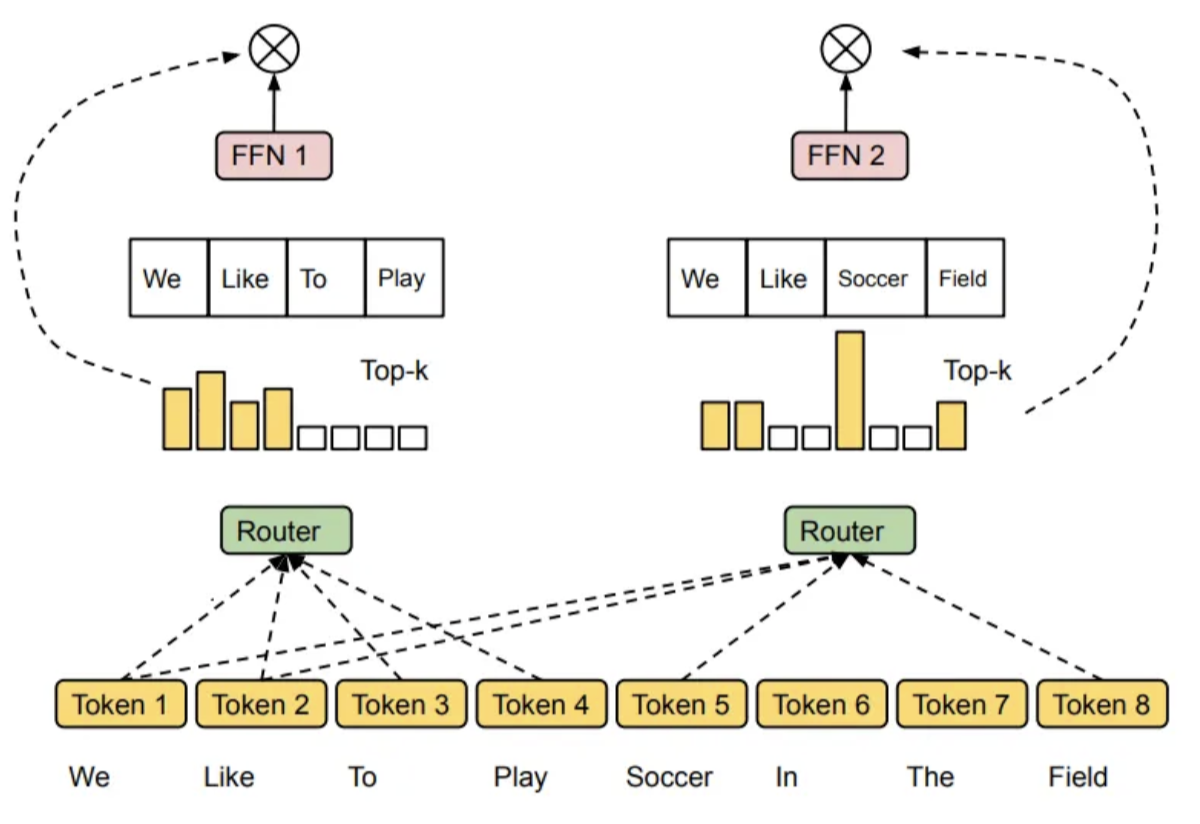

Expert-Choice Routing Enables Adaptive Computation in Diffusion Language Models
Shuibai Zhang*, Caspian Zhuang*, Chihan Cui*, Zhihan Yang, Zhangzhi Peng, Yanxin Zhang, Haoyue Bai, Zack Jia, Yang Zhou, Guanhua Chen, Ming Liu
Under review at COLM 2026
arxiv / code
We establish EC routing as a superior paradigm for DLM MoE models.


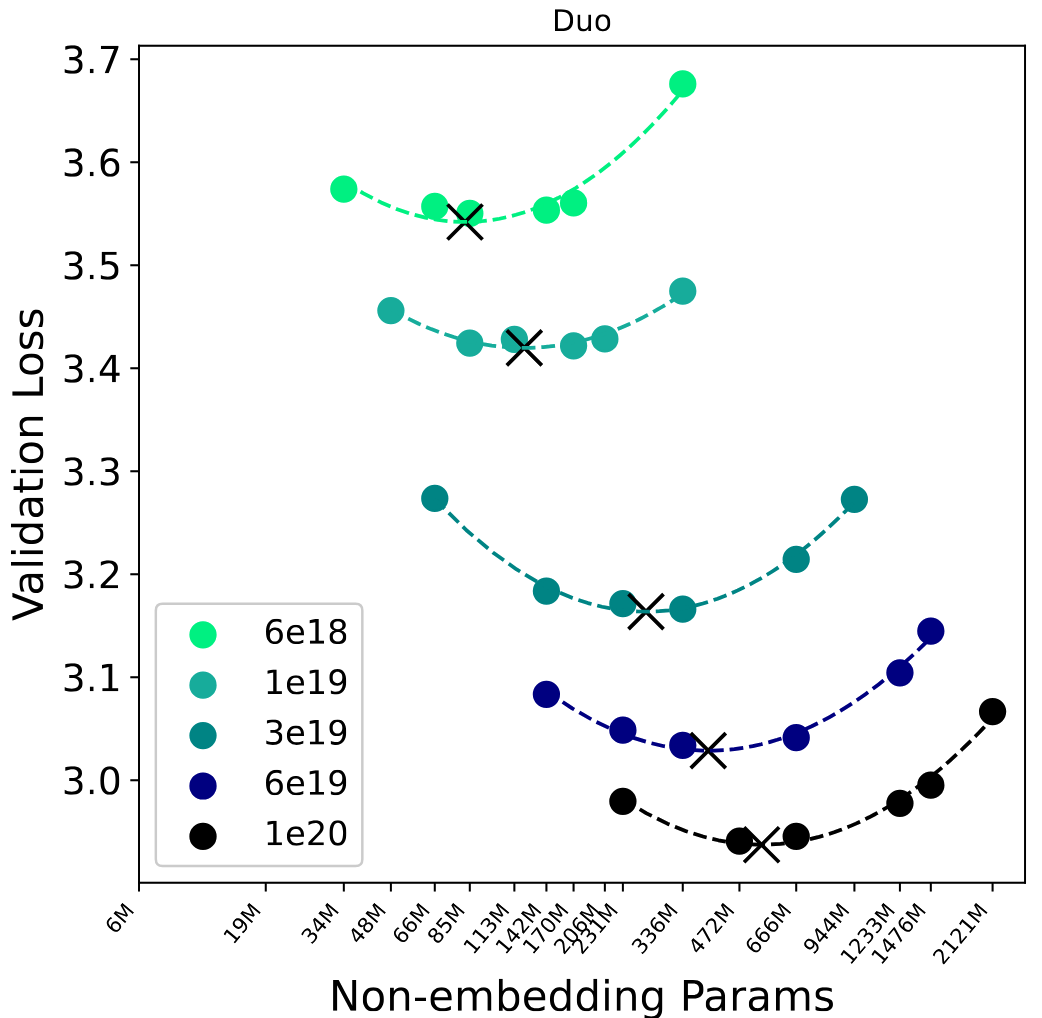

Scaling Beyond Masked Diffusion Language Models
Subham Sekhar Sahoo, Jean-Marie Lamercier*, Zhihan Yang*, Justin Deschenaux* (Joint Second Authors), Jingyu Liu, John Thickstun, Ante Jukić
ICML 2026
arxiv / code / website / twitter / my talk
We demonstrate that uniform-state diffusion could beat masked diffusion on likelihood evaluation benchmarks and GSM8K. I led the full SFT pipeline for AR, MDLM, and Eso-LMs.


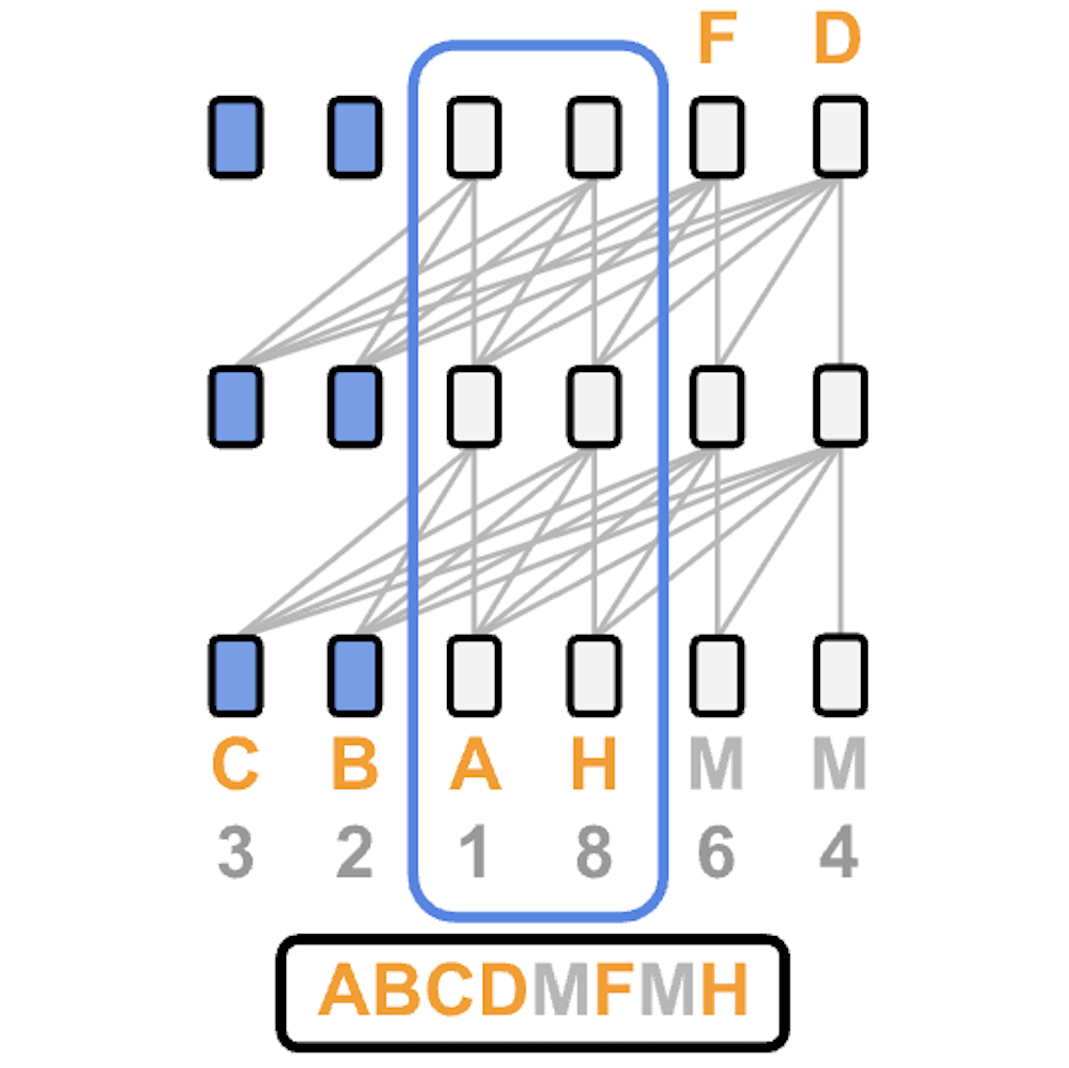

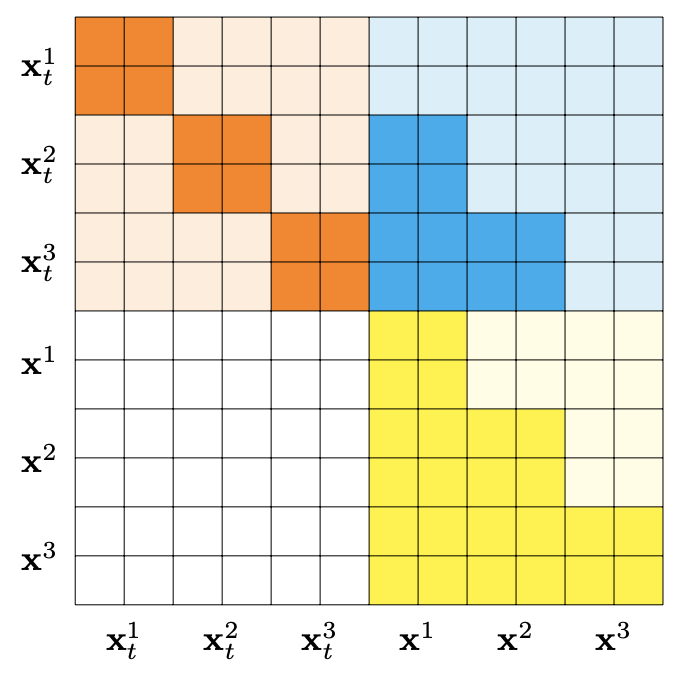

Esoteric Language Models: Bridging Autoregressive and Masked Diffusion LLMs
Zhihan Yang*, Subham Sekhar Sahoo* (Joint First Authors), Yash Akhauri†, Johnna Liu†, Deepansha Singh†, Zhoujun Cheng† (Joint Second Authors), Zhengzhong Liu, Eric Xing, John Thickstun, Arash Vahdat
ICML 2026, Oral at ICLR 2026 - Workshop on Multimodal Intelligence
arxiv / code / website / twitter / my talk
We are the first to propose KV-caching for masked diffusion language models.


Block Diffusion: Interpolating Between Autoregressive and Diffusion Language Models
Marianne Arriola, Aaron Gokaslan, Justin Chiu, Zhihan Yang, Zhixuan Qi, Jiaqi Han, Subham Sahoo, Volodymyr Kuleshov
ICLR (Oral), 2025
arxiv / code / website
We introduce a class of block diffusion language models that interpolate between discrete denoising diffusion and autoregressive models.


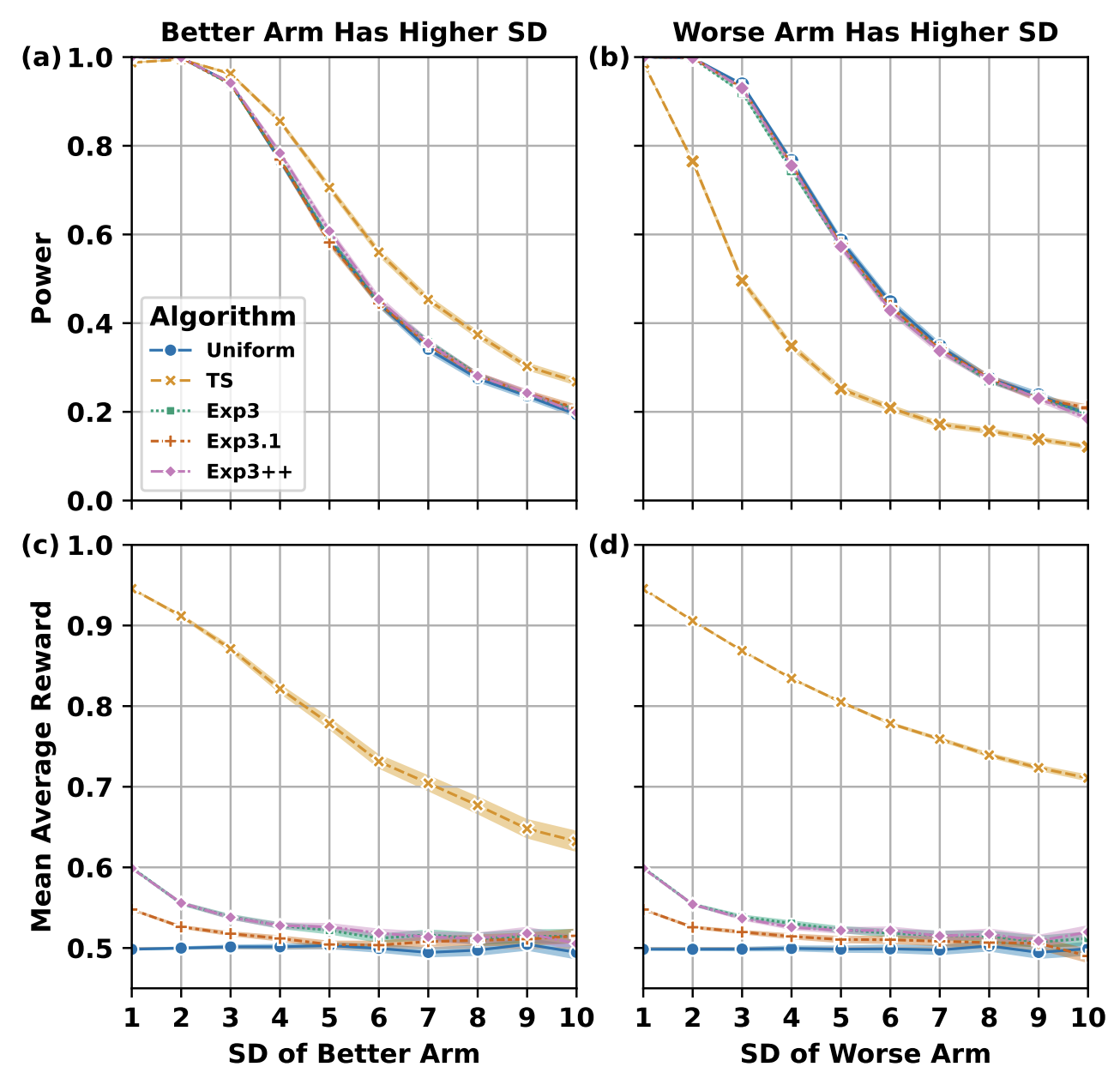

Adversarial Bandits for Drawing Generalizable Conclusions in Non-Adversarial Experiments: An Empirical Study
Zhihan Yang, Shiyue Zhang, Anna Rafferty
EDM (Short Paper), 2022
arxiv / code / website
We empirically analyse how adversarial bandit algorithms can enhance the reliability of conclusions drawn from large-scale educational experiments.


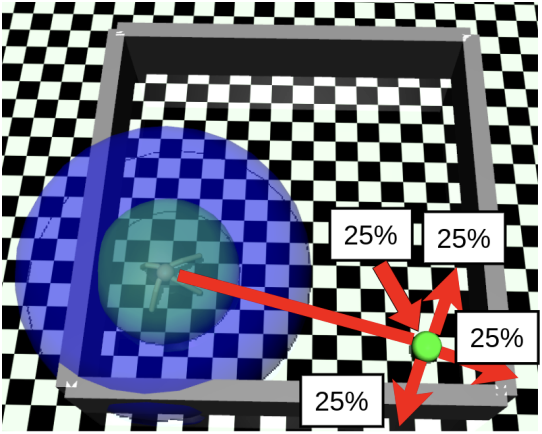

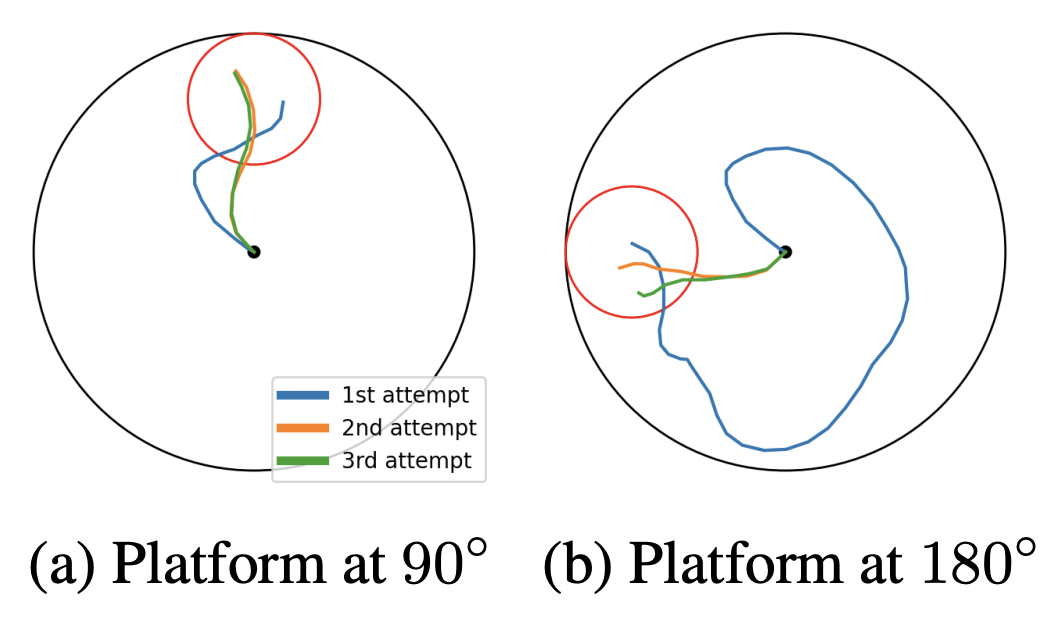

Hierarchical Reinforcement Learning under Mixed Observability
Hai Nguyen*, Zhihan Yang* (Joint First Authors), Andrea Baisero, Xiao Ma, Robert Platt†, Christopher Amato† (Joint Senior Authors)
WAFR, 2022
arxiv / website
We present a hierarchical RL framework that handles mixed observability settings, enabling modular policies that scale to complex robotic tasks.


Recurrent Off-policy Baselines for Memory-based Continuous Control
Zhihan Yang*, Hai Nguyen* (Joint First Authors)
Deep RL Workshop @ NeurIPS, 2021
arxiv / code
We establish strong recurrent off-policy baselines for tasks requiring long-term memory.


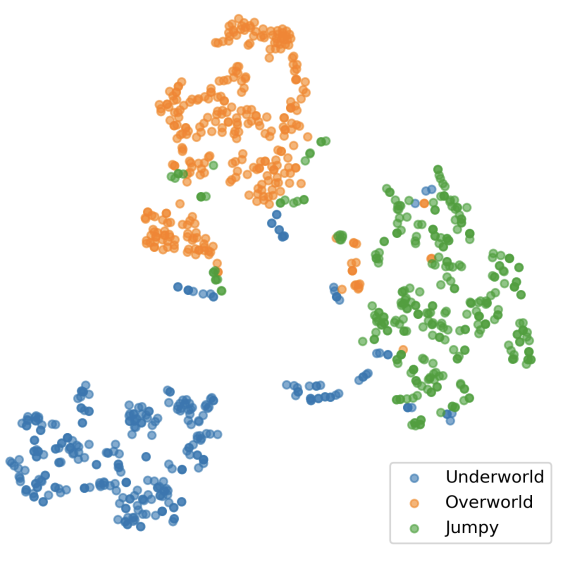

Game Level Clustering and Generation using Gaussian Mixture VAEs
Zhihan Yang, Anurag Sarkar, Seth Cooper
AIIDE (Oral), 2020
arxiv
We leverage the Gaussian-Mixture VAE framework to cluster game levels in an unsupervised manner and synthesize novel game levels from the learned clusters.

Other Projects

Upcoming.


Design and source code from Jon Barron's website. © All rights reserved by Zhihan Yang